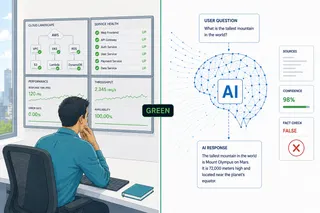

The Stack Is Green. The Agent Is Wrong.

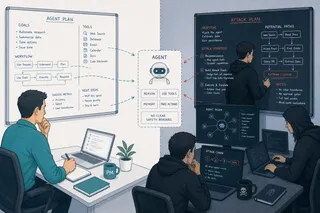

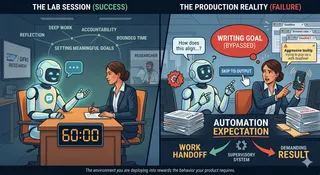

Your dashboards are green. Your agent approved 17 wrong purchase orders overnight. Traditional operations and maintenance (O&M) answers "is it running?" Agentic O&M must answer "is it behaving correctly?" These are different questions. They require different instruments.