Change Management for Agentic AI - Designing the Human Side of the Transition

Most change management programs treat agentic AI as an adoption problem. The diagnosis is wrong. Change management is being treated as adoption when the real work is supervision. That is a different skill, and most teams are not designing for it.

The first time I put a patient under general anesthesia, I went into a state of panic. Once the patient was fully sedated, I realized his life depended on the perfect synchronization of the ventilator, the drip of IV fluids and drugs, the body temperature, blood loss monitoring, urine output, heart rate, and the precise height and tilt of the table that kept the surgeons focused and happy.

Over time, something shifted. It did not happen in a day. The ventilator cycled, the pressure held, and the numbers settled into a pattern I recognized. I stopped managing the systems and started managing the patient. The attention that was going into keeping him breathing was now free for something harder: what was the blood pressure trend telling me, what did the surgeon's body language say about what came next, was the temperature dropping faster than the case should allow.

What I learned in those years as a physician about automation, monitoring, and machine behavior followed me throughout my career as a product manager. It has never been more relevant than it is now, in the era of agentic system design.

That transition, from operating the tools to focusing on what the tools are serving, is the most consequential moment in the operating room. Every field that has put humans alongside autonomous systems has had to design for it. Most enterprise AI deployments have not.

The Problem That Comes After the Product

The previous articles in this series covered what it takes to build an agentic system well: the four runtime design artifacts, the evaluation frameworks, the six observation metrics that close the product lifecycle loop. The assumption running through all of it was that you had built the system. The agent was deployed. The evals passed.

Even then, the hardest problem had not started yet.

Deploying an agent that acts does not just change the software. It changes the job of every person who interacts with it. The procurement manager who used to process orders is now supervising the system that processes them. The compliance analyst who used to flag exceptions is now auditing whether the agent flagged the right ones. These are not the same jobs. They are new jobs wearing the old jobs' names.

Most change management programs treat this as an adoption problem: communicate the benefits, run training sessions, measure utilization. The diagnosis is wrong. Change management is being treated as adoption when the real work is supervision. The question is not whether people are willing to use the new system. The question is whether they know how to supervise it, which is a different cognitive skill from the one they had before.

Aviation Learned This the Hard Way

In December 1978, United Airlines Flight 173 ran out of fuel while circling Portland. The aircraft was mechanically sound. The crew knew the fuel state was critical. The captain, fixated on a landing gear indicator, could not process it. The accident report was not about mechanical failure. It was about a new kind of failure: a technically proficient crew that had never been trained to supervise an increasingly automated cockpit as a system.

The investigation produced what became Crew Resource Management. CRM does not teach pilots to fly. It teaches them to supervise: situational awareness across the whole system, communication across authority gradients, structured handoffs at every consequential transition. And critically, it is not a course. Early CRM leaders found that a single training event produced attitude shifts that faded within months. It became a recurrent practice, embedded in annual training and maintained through live flight audits in non-emergency conditions. You cannot certify a supervisory culture. You maintain it.

Aviation took forty years to get this right. Most enterprise AI teams treat it as something that can be resolved before go-live.

Two Channels, One PM

For product teams, the implication is structural, not tactical.

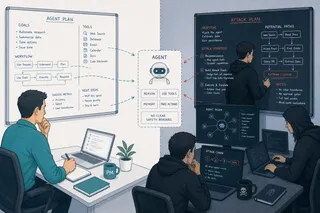

Every previous software product required one design channel: the user experience of the product. Agentic systems require two. The first channel is the agent: what it does, how its autonomy boundary is defined, what it logs, what it surfaces for review, how it handles errors. Most teams design this channel because it maps onto existing product practice.

The second channel is the human experience of supervising the agent: how a person knows what the agent is doing, how they develop the skills to catch its errors, how the supervisory role fits into existing workflows, and how they stay engaged rather than drifting toward passive monitoring. Most teams do not design this channel. They assume it will emerge from training and documentation. It does not.

The failure pattern is predictable. The agent performs well in the first weeks. Supervision feels active because the system is new. Then reliability builds. Attention drifts. The supervision becomes passive. A background failure accumulates until a supplier calls to ask about orders that never arrived. This is automation complacency not as a character flaw but as the predictable output of a supervisory role that was never designed.

The gain is real, though. When the transition works, the person who used to process orders now has attention for the supplier relationship, the exception that does not fit the system's model, the pattern across vendors no single transaction reveals. The gain from the actor-to-supervisor transition is not time saved on routine tasks. It is cognitive capacity freed for work that actually requires judgment. Designing for that gain is the second channel.

What Goes Wrong First

The first failure mode is not resistance. It is selective trust.

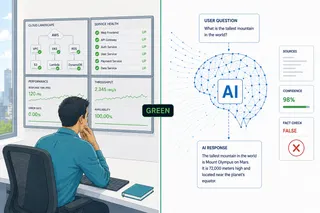

Workers do not reject AI wholesale. They trust it for low-stakes decisions and disengage from it for high-stakes ones, which is precisely backwards from where algorithmic assistance is most valuable. Research on algorithm aversion is clear: trust collapses after a single observed failure much faster than it accumulates through many correct outputs. The design response is not better communication. It is an interface that makes reliability visible, uncertainty legible, and errors catchable before they compound. You cannot communicate your way past algorithm aversion. You design past it.

There is an equal and opposite failure: automation bias. Under routine conditions, supervisors under-monitor. Under novel or high-pressure conditions, they either over-ride or blindly defer. Neither is effective supervision. The interface has to actively calibrate trust, not merely enable override.

The second failure mode is shadow workflows. When the supervisory role is poorly designed, teams maintain parallel manual processes alongside the agent. This is not sabotage. It is rational risk management by people who have not been given a supervision interface they trust. The cost is invisible to dashboards and substantial in practice. One recent analysis estimated that large enterprises lost, on average, tens of millions of dollars in 2024 from digital workflow inefficiencies linked to poorly integrated automation. The agent is deployed, the utilization metrics look acceptable, and the workforce is running a second process in parallel.

The third failure mode is slower and harder to reverse. When an agent takes over tasks humans used to perform, the capacity to perform those tasks and, more critically, to recognize when the agent performs them incorrectly, degrades over time. Aviation research found that basic procedural skills remained largely intact after extended autopilot use. What deteriorated were cognitive and strategic skills: situational awareness, the ability to recognize automation failures before they cascaded. The supervisor does not forget how to do the job. They forget how to think about the job. And unlike the first two failure modes, this one is invisible to the person experiencing it.

The Workflow Integration Principle

Good supervision does not require behavioral revolution. This sounds obvious and is routinely violated.

The supervision layer that does not fit existing cognitive patterns will be worked around, not adopted. The CRM tools that survived in aviation are the ones that slotted into moments that already existed in the workflow: repeating back a proposed action before execution, structured briefings before high-workload phases, the two-challenge rule that empowers any crew member to stop a decision they are concerned about. These are not new processes layered on top of flying. They are communication patterns inserted into transitions that were already there.

The design requirement is the same for enterprise agentic products: find where in the existing workflow the supervision moment naturally belongs, and build the interface there. The approval moment, the confidence signal, the override affordance — all of it should appear where the worker is already making decisions, not in a monitoring dashboard that competes with the actual work for attention.

One finding from clinical supervisory training is worth naming directly: structured supervision in surgical ICUs improved outcomes but increased the average duration of rapid response team events by 33 percent. Good supervision was not faster. It was more thorough. A temporary slowdown after agentic rollout is not a failure signal. It may be the correct signal that supervision is actually happening. The metric to watch is not speed. It is whether errors are being caught before they compound.

Accountability Is a Design Problem

A clinical decision support system analyzes a pathology result and returns no malignancy detected. The physician sees the recommendation, confirms it, and moves on. Eighteen months later the patient is diagnosed with stage IV cancer.

Who is responsible?

The vendor who built the model will point to the physician who approved the output. The physician will note that the system was cleared and integrated by the hospital. The hospital will reference the vendor's accuracy claims and the regulatory clearance. The regulator will note that the system performed within labeled parameters. Nobody is clearly culpable. The patient cannot get a legible explanation of why the model concluded what it did. And no one in the chain felt sufficiently obligated, at the moment that mattered, to question the recommendation.

This is not a hypothetical. It is the structure of every accountability failure in supervised AI systems. Andreas Matthias named it in 2004: the culpability gap, the public accountability gap, the active responsibility gap. Three distinct problems that require design responses, not policy documents.

Research shows that when AI is involved in consequential decisions, human supervisors feel less personally responsible for errors, even when they approved the action. The more automated the pathway, the more responsibility feels shared, and the less any individual feels obligated to catch errors. That diffusion is not fixed by naming someone in a governance document. It is fixed by designing a system where one named person has a legible view of what the agent decided, on what grounds, with what confidence, and carries that record into the outcome.

That legible view has prerequisites. The physician in the cancer case cannot exercise meaningful oversight without three things: external observability, meaning a real-time window into what the model is doing and what it is not doing; data lineage, meaning traceability from the output back to the training data, so the supervisor can ask whether this patient population was represented in the data the model learned from; and model transparency, meaning enough visibility into the reasoning to evaluate whether the conclusion follows from the evidence. Without these, the human is not supervising. They are witnessing. The difference matters the moment something goes wrong.

These are not research capabilities or regulatory aspirations. They are the minimum infrastructure for a supervision interface that is honest about what it is asking the human to do. A system that asks a physician to sign off on an AI recommendation without providing observability, lineage, and transparency is not keeping the human in the loop. It is assigning them liability for a decision they had no real capacity to evaluate.

An audit surface, a decision trail, and an override protocol, present before the first production incident. Not compliance artifacts. The technical conditions under which accountability is real rather than nominal.

The PM Who Designs Half a System

There is a practical test for whether a PM has designed both channels.

Ask for the override frequency metric: what percentage of agent actions are reviewed and modified by human supervisors, in which workflows, over what period. If the team cannot produce it, the approval moment was not designed to be logged. Ask for the supervision training design: not the completion rate, but whether the training includes practice managing the process when the agent is wrong or unavailable. If it does not exist, Channel 2 was left to HR. Ask whether the supervisory role fits inside the existing workflow or requires a separate practice. Shadow workflows leave no formal trace. They show up in the gap between what the utilization dashboard reports and what the work actually looks like on the floor.

The anesthesiologist who trusts the ventilator enough to focus on the blood pressure trend is not failing to supervise. She is doing the harder job that the trust made possible. The design question is how the system holds that trust stable, surfaces when it is eroding, and keeps the supervisory skill available for the moment it is needed.

You built the agent. The agent is working. Now design the person who is supposed to be watching it.

This article is part of an ongoing series on agentic AI product design, healthcare data, and the human side of technology adoption.