From Research to Reality: What It Takes to Scale Clinical AI Beyond the Lab

I still read NEJM and BMJ cover to cover. Lately they are filled with elegant AI breakthroughs: models that promise to transform diagnosis, prediction, and resource allocation. The deeper I get into building enterprise software, the more critical I become. A paper is a demo. A scaled deployment is a different problem.

Twice in my career I have left clinical practice for product management at a big tech company, but I've never really left medicine. Over the years, I've developed an expensive reading habit: I still read NEJM and BMJ cover to cover, or at least scroll through every article, the way some people compulsively check news feeds. It's a ritual that keeps me tethered to the work I once did, a way of staying connected to the questions that first drew me to medicine.

Lately, these journals have been filled with AI breakthroughs. Elegant models that promise to transform how we diagnose disease, predict outcomes, and allocate scarce resources. Each paper seems more impressive than the last, and initially, I consumed them with the enthusiasm of someone watching their field finally catch up to the future.

But the deeper I've moved into building intelligent software solutions for global deployment, the more critical I've become. In enterprise software, we design for scale from day one. An inventory optimization model built for a warehouse in Singapore needs to work in São Paulo. A recommendation engine deployed for one retail chain should generalize to the next. The systems we build have to survive messy reality: incomplete data, varying workflows, different operational constraints, users who don't behave as predicted.

Yet most clinical AI research I encounter describes highly localized successes, optimized for a specific hospital, a particular patient population, a defined workflow. The papers are rigorous, the science is sound, but they leave a fundamental question unanswered: what happens when you try to move this from here to there?

My skepticism sharpened recently when I completed the Harvard Medical School Executive Education program on Healthcare Transformation and AI. The course reinforced something I'd been sensing for a while: the gap between "this works brilliantly at Hospital X" and "this can be deployed across health systems globally" is wider than most publications acknowledge, and perhaps wider than most researchers realize. It's a gap I think about every time I read a promising AI paper now, and it's one worth examining closely.

An Elegant Solution to a Real Problem

Consider a recent paper in BMJ Digital Health. Researchers from two Danish university hospitals developed an explainable AI model to predict which cardiac surgery patients will develop chronic kidney disease within three years. The clinical need is clear: CKD affects roughly one in seven cardiac surgery patients, often goes undiagnosed for months or years, and significantly increases the risk of cardiovascular events and death. Routine surveillance of all patients would be prohibitively expensive, but without it, many cases go undetected until substantial damage has occurred.

The model is remarkably simple. At discharge, it uses just four variables: baseline kidney function, the change in creatinine during hospitalization, age, and sex. These inputs feed into a transparent mathematical formula (no black box, no inscrutability) that stratifies patients into risk groups. The researchers found that by screening approximately 30% of patients flagged as high-risk, they could identify over 80% of future CKD cases. Among those screened, nearly one in three would ultimately develop the disease.
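The screening figures above hang together through a simple identity: the cases found equal the patients flagged times the hit rate among them, and also the total cases times the share captured. The sketch below is only a back-of-the-envelope check using the rounded numbers quoted in this article (30% flagged, 80% captured, one in three flagged patients affected); the function names and the 1,000-patient cohort are illustrative assumptions, not anything taken from the paper.

```python
def implied_prevalence(flagged_fraction: float, sensitivity: float, ppv: float) -> float:
    """Prevalence implied by: flagged_fraction * ppv == prevalence * sensitivity."""
    return flagged_fraction * ppv / sensitivity


def screening_yield(n_patients: int, flagged_fraction: float,
                    sensitivity: float, prevalence: float) -> dict:
    """Expected screening workload and catch for a cohort of n_patients."""
    cases = n_patients * prevalence          # patients who will develop CKD
    screened = n_patients * flagged_fraction  # patients sent for follow-up
    found = cases * sensitivity               # cases that fall in the flagged group
    return {
        "screened": screened,
        "cases_found": found,
        "cases_missed": cases - found,
        "ppv": found / screened,              # share of screened patients who are cases
    }


# Rounded figures from the text; the cohort size is a hypothetical example.
rate = implied_prevalence(flagged_fraction=0.30, sensitivity=0.80, ppv=1 / 3)
plan = screening_yield(n_patients=1000, flagged_fraction=0.30,
                       sensitivity=0.80, prevalence=rate)
print(f"implied prevalence: {rate:.3f}")
print(plan)
```

With these rounded inputs the implied prevalence comes out at about one in eight, in the same general range as the "roughly one in seven" figure quoted earlier. The point of the exercise is that a different hospital with a different prevalence would see a different hit rate among screened patients, even if the model ranked patients equally well.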

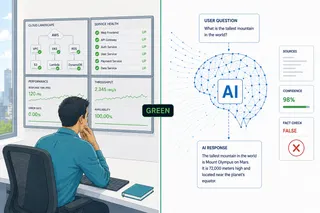

The model achieved strong performance metrics: an area under the curve (AUC) of 0.86 in internal validation, rising to 0.88 when tested at a second Danish hospital. The researchers used explainable AI rather than more opaque methods, making the model interpretable to clinicians. After some recalibration, it demonstrated good calibration alongside its discriminatory power. The work is careful, thorough, clinically sensible. The paper concludes that the model is ready for clinical implementation.

But here's the question that nags at me: ready for implementation where?

The Geography of Generalization

Could this model be deployed at Karolinska University Hospital in Stockholm tomorrow? Probably yes, with some work. Sweden and Denmark have comparable healthcare systems, similar cardiac surgery practices, overlapping patient demographics. The infrastructure would be familiar. Recalibration might be needed, but the fundamental assumptions would likely hold. Yet even within Denmark, the authors observed miscalibration before recalibration, underscoring how sensitive risk estimates can be to subtle population shifts.

What about Stanford Health or UCLA? Now the complexity multiplies. The US cardiac surgery population differs from Denmark's in consequential ways. There's greater ethnic diversity, different baseline kidney function distributions, varying rates of diabetes and obesity. Surgical techniques and perioperative protocols differ. Most importantly, the follow-up infrastructure is fundamentally different. Denmark's integrated system ensures longitudinal tracking; American PPO fragmentation makes it optional and often incomplete. The model might still discriminate well, the AUC could remain high, but the predicted probabilities would almost certainly need recalibration. More fundamentally, the threshold for high risk might need redefinition based on local resource constraints and what the health system can actually absorb.
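The claim that AUC can stay high while probabilities drift is easy to see in a toy example. The sketch below uses made-up scores and outcomes and an intercept-only recalibration (a single shift on the logit scale); that method is chosen here purely for illustration and is not necessarily what the Danish authors used. Because the shift preserves the ordering of patients, the AUC is untouched while the average predicted risk moves to match the observed rate.

```python
import math


def auc(scores, labels):
    """Chance that a random positive outranks a random negative (ties count half)."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def logit(p):
    return math.log(p / (1 - p))


def expit(x):
    return 1 / (1 + math.exp(-x))


def recalibrate_intercept(probs, labels, iters=60):
    """Shift every prediction by one constant on the logit scale so that the
    mean predicted risk equals the observed event rate (found by bisection)."""
    target = sum(labels) / len(labels)
    lo, hi = -10.0, 10.0
    for _ in range(iters):
        mid = (lo + hi) / 2
        mean_pred = sum(expit(logit(p) + mid) for p in probs) / len(probs)
        if mean_pred < target:
            lo = mid
        else:
            hi = mid
    shift = (lo + hi) / 2
    return [expit(logit(p) + shift) for p in probs]


# Hypothetical new-site data: the model ranks well but underestimates risk.
probs = [0.05, 0.10, 0.15, 0.20, 0.30, 0.40, 0.50, 0.60]
labels = [0, 0, 0, 1, 0, 1, 1, 1]

fixed = recalibrate_intercept(probs, labels)
print("AUC before:", auc(probs, labels), "after:", auc(fixed, labels))
print("mean predicted before:", sum(probs) / len(probs))
print("mean predicted after: ", sum(fixed) / len(fixed))
print("observed rate:        ", sum(labels) / len(labels))
```

A strong AUC in external validation therefore says little about whether a "30% risk" at the new site actually means 30%. That has to be checked, and usually corrected, locally.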

And what about Lagos University Teaching Hospital? Here, the generalization challenges compound. Different genetic backgrounds, different disease prevalence, different baseline kidney function. Resource constraints, variable perioperative protocols, potential gaps in lab systems and follow-up tracking. Different staffing models, different treatment availability. The model's ability to rank risk appropriately might survive. But whether its predictions remain clinically meaningful, whether it works within existing workflows, and whether the health system can operationalize its recommendations all become increasingly uncertain.

Three Kinds of Generalization

This brings us to a framework that's often missing from clinical AI discussions. For a model to truly scale beyond its birthplace, it must generalize across three distinct dimensions:

- Statistical generalization: does the model maintain its discriminatory performance in new populations? This is what external validation typically tests. It asks whether the model can still separate high-risk from low-risk patients when applied to different data. It's necessary but insufficient.

- Clinical generalization: do the predictions remain clinically meaningful? Do risk thresholds correspond to actionable decisions? Does the model fail in ways that make clinical sense? This requires understanding not just whether the model works statistically, but whether its outputs can be safely trusted by clinicians operating under different constraints, different baselines of risk, different standards of care.

- Operational generalization: can the model survive real-world conditions? What happens when labs are delayed or missing? When workflows differ from the training environment? When follow-up capacity is limited? When data quality varies?

The Danish CKD model handles the first layer well, as demonstrated by its strong AUC in external validation. It shows promise on the second: its variables make clinical sense and its predictions align with known pathophysiology. But the third layer, operational generalization, remains largely untested. And this is where most models quietly die in practice.
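One concrete piece of operational generalization is deciding what a model does when an input is delayed or missing. The sketch below shows one possible pattern, abstaining with a stated reason instead of silently producing a score. The field names, the `Result` type, and the stand-in scoring function are all hypothetical; the published formula is not reproduced here.

```python
from dataclasses import dataclass
from typing import Callable, Mapping, Optional

# Hypothetical field names for the four discharge variables described above.
REQUIRED_FIELDS = ("baseline_kidney_function", "creatinine_change", "age", "sex")


@dataclass
class Result:
    status: str              # "scored" or "abstained"
    risk: Optional[float]    # None when the model abstains
    reason: str = ""


def safe_predict(record: Mapping[str, object],
                 score_fn: Callable[[Mapping[str, object]], float]) -> Result:
    """Score a patient only when every required input is present."""
    missing = [name for name in REQUIRED_FIELDS if record.get(name) is None]
    if missing:
        # Degrade gracefully: tell the workflow what is missing, do not guess.
        return Result("abstained", None, "missing: " + ", ".join(missing))
    return Result("scored", score_fn(record))


def placeholder_score(record: Mapping[str, object]) -> float:
    """Stand-in for the real model; returns a fixed value for illustration only."""
    return 0.2


complete = {"baseline_kidney_function": 70, "creatinine_change": 0.3,
            "age": 66, "sex": "F"}
delayed_lab = dict(complete, creatinine_change=None)

print(safe_predict(complete, placeholder_score))
print(safe_predict(delayed_lab, placeholder_score))
```

Whether to abstain, wait for the lab, or fall back to a simpler rule is a local decision. What matters is that the behavior is designed and tested rather than discovered in production.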

The Uncomfortable Trade-off

Here's a truth that makes everyone uncomfortable: making models generalizable often makes them less optimal for any single setting. The Danish researchers could have built a more complex model incorporating additional local variables, specific lab values unique to their systems, nuanced workflow markers. They might have achieved even higher performance at their home institutions. But such a model would be even harder to export.

This tension between local optimization and global generalization is fundamental. In machine learning, it's well understood. In healthcare, we're still grappling with it. Do we want 100 locally optimized models that work brilliantly in their home institutions, or 10 robust models that work reliably across diverse settings?

From a commercial and public health perspective, we need the latter. But from a research incentive perspective, we often get the former. Publishing requires novelty and impressive metrics. A model with an AUC of 0.90 that only works at one institution makes a stronger paper than one with an AUC of 0.82 that works across fifty hospitals. Yet the second is far more valuable to the world.

What Bridging the Gap Actually Requires

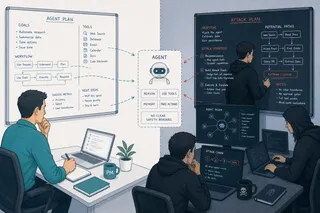

When I build enterprise cloud products, commercial readiness has a specific meaning. It means the product has been tested for robustness to variation, can gracefully degrade when conditions aren't optimal, includes clear procedures for local adaptation, and has infrastructure for ongoing monitoring and recalibration.

It means asking whether the target system can absorb the alert workload, defining when the model should and shouldn't be used, building governance frameworks that establish accountability, and designing for resilience to real-world messiness rather than laboratory conditions.
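The alert-workload question can be made concrete by working backward from capacity. The sketch below picks a risk threshold so that the number of flagged patients never exceeds the follow-up slots a site can actually staff. The function name, the risk values, and the capacities are illustrative assumptions, and a real deployment would weigh missed cases against workload rather than capacity alone.

```python
def threshold_for_capacity(risks, capacity):
    """Lowest threshold t such that the count of patients with risk >= t
    does not exceed the available follow-up capacity."""
    ranked = sorted(risks, reverse=True)
    if capacity <= 0 or not ranked:
        return float("inf")          # no slots (or no patients): flag nobody
    if capacity >= len(ranked):
        return ranked[-1]            # enough slots to follow up everyone
    cutoff = ranked[capacity - 1]
    if ranked[capacity] == cutoff:
        # A tie straddles the capacity boundary; step up to the next
        # distinct risk value so the flagged count stays within capacity.
        higher = [r for r in ranked if r > cutoff]
        return min(higher) if higher else float("inf")
    return cutoff


def flagged(risks, threshold):
    """Patients at or above the threshold."""
    return [r for r in risks if r >= threshold]


# Hypothetical predicted risks for one month of discharges.
risks = [0.90, 0.70, 0.70, 0.40, 0.20]

for slots in (0, 2, 3, 10):
    t = threshold_for_capacity(risks, slots)
    print(f"capacity={slots}: threshold={t}, flagged={len(flagged(risks, t))}")
```

The same model can therefore imply very different "high-risk" cutoffs at two sites with identical patients but different nephrology capacity, which is one reason thresholds rarely travel unchanged.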

The Danish CKD model has elements of this, particularly around explainability and clinical sensibility. But achieving true commercial readiness across diverse global settings would require multi-country validation, robustness testing under varying data conditions, workflow adaptation for different care delivery models, threshold optimization for different resource contexts, and continuous monitoring infrastructure that works across EMR systems.

This isn't a criticism of the research, to be clear. This is solid, rigorous work that advances the field meaningfully. The authors have demonstrated something important: that AI can identify high-risk patients using simple, interpretable variables, and that such identification can be done with impressive accuracy. This is exactly the kind of clinical AI we need more of.

But when the paper states the model is "ready for clinical implementation," that phrase reasonably applies to similar clinical environments, not to the separate question of global commercial deployment. These are different stages requiring different work, different expertise, different resources. Recognizing this isn't diminishing the research; it's clarifying what comes next.

The Hidden Infrastructure Problem

There's another layer to this that rarely makes it into research papers: even if a model generalizes statistically and clinically, deployment still requires infrastructure that often doesn't exist.

Consider the Danish model's elegant simplicity: four variables, available at discharge, feeding into a transparent formula. Beautiful in theory. But in practice, you need EMR integration to pull those variables automatically. You need a system to calculate the prediction and surface it to the right clinician at the right time. You need a workflow for what happens when a patient is flagged as high-risk: who sees the alert, who schedules follow-up, who ensures it actually happens.

In an integrated system like Kaiser Permanente or Denmark's national health service, this infrastructure might be achievable. In a fragmented American PPO environment where the cardiac surgeon, the primary care physician, the lab, and the patient's insurance company barely communicate? The model's statistical performance becomes almost irrelevant. The operational gap is simply too wide.

What This Means Going Forward

I see this playing out across clinical AI: brilliant research models that work in one setting, struggle to scale to another, and die quietly when confronted with operational reality. Not because the science is wrong, but because we haven't built the bridges between research validation and global deployment.

We need those bridges. That means researchers thinking earlier about generalization, even if it means accepting slightly lower local performance. It means health systems investing in the unglamorous work of adaptation, integration, and maintenance. It means technology companies bringing commercial discipline while respecting clinical constraints they may not fully understand.

Most importantly, it means honest conversations about where models actually work and where they don't. Not every promising research model needs to become a global product. But for the ones that should, we need realistic timelines, adequate resources, and clear success criteria for each stage of generalization.

The Danish CKD model represents real progress. The challenge now isn't just building more models like it. It's building the infrastructure, processes, and incentives to take the best ones from "works here" to "works everywhere that needs it."

That's the gap I think about every time I read a promising AI paper. And it's the gap that will determine whether this wave of clinical AI delivers on its promise or remains a collection of impressive but isolated demonstrations.

This article discusses research published in: Lindhardt RB, Rasmussen SB, Machado M, et al. Development and evaluation of an AI-based prediction model for chronic kidney disease after cardiac surgery. BMJ Digital Health 2026;2:e000172.