The Long Game: Why Healthcare's AI Future Depends on Attention Span

The most important AI benchmark you have never heard of measures one thing: how long can an AI system work on a task before it loses the thread? In healthcare, that matters more than single-answer accuracy. A sepsis protocol is a multi-hour trajectory, not a prompt. Attention span is the real gate.

The most important AI benchmark you've never heard of measures something simple: how long can an AI system work on a task before it loses the thread?

Not how smart it is. Not how accurate. How long it can maintain coherent reasoning without breaking down, forgetting context, or confidently heading in the wrong direction.

Think of it as attention span for artificial intelligence.

This capability, tracked by researchers at METR, a research group that emerged from ARC's evaluation work, has a technical name: long-horizon task completion. It's measured by mapping AI task success to how long the same task takes a human expert. And according to their latest findings, it's improving faster than anyone expected.
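To make the idea of mapping task success to human task time concrete, here is a minimal sketch. It fits a logistic curve of success against the log of human completion time, then reads off the task length at which predicted success drops to a chosen rate. The function names, the toy attempt data, and the plain Newton fit are all assumptions for illustration; this is not METR's code or data.

```python
import math

def fit_logistic(xs, ys, iterations=25):
    """Fit P(success) = sigmoid(a + b * x) by Newton's method; returns (a, b)."""
    a = b = 0.0
    for _ in range(iterations):
        g0 = g1 = h00 = h01 = h11 = 0.0
        for x, y in zip(xs, ys):
            p = 1.0 / (1.0 + math.exp(-(a + b * x)))
            w = p * (1.0 - p)
            g0 += y - p            # gradient w.r.t. intercept
            g1 += (y - p) * x      # gradient w.r.t. slope
            h00 += w               # entries of X^T W X
            h01 += w * x
            h11 += w * x * x
        det = h00 * h11 - h01 * h01
        a += (h11 * g0 - h01 * g1) / det
        b += (h00 * g1 - h01 * g0) / det
    return a, b

def time_horizon(a, b, success_rate=0.5):
    """Human task length (minutes) at which predicted success equals success_rate."""
    logit = math.log(success_rate / (1.0 - success_rate))
    return 2.0 ** ((logit - a) / b)

# Hypothetical data: human task length in minutes -> AI successes out of 4 attempts.
SUCCESSES_OF_4 = {1: 4, 2: 4, 4: 3, 8: 3, 16: 1, 32: 1, 64: 0, 128: 0}

xs, ys = [], []
for minutes, wins in SUCCESSES_OF_4.items():
    for attempt in range(4):
        xs.append(math.log2(minutes))          # x is log2 of human time
        ys.append(1 if attempt < wins else 0)  # 1 = success, 0 = failure

a, b = fit_logistic(xs, ys)
h50 = time_horizon(a, b, 0.5)   # task length with a 50% chance of success
h80 = time_horizon(a, b, 0.8)   # stricter reliability gives a shorter horizon
print(f"50% horizon: {h50:.1f} min, 80% horizon: {h80:.1f} min")
```

The toy data is deliberately symmetric around a task length of 2^3.5 (about 11.3 minutes), so the 50% horizon lands there. Demanding a higher success rate always yields a shorter horizon, which is why any "reliable" figure should state the reliability level it assumes.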

When METR published their research in March 2025, the most advanced AI (Claude 3.7 Sonnet) could reliably complete tasks that take humans about ten minutes. The latest data from December 2025 is stunning: the most advanced AI (GPT-5.2) has reached double what we anticipated, capable of handling tasks that take humans 55 minutes with high success rates. The pace has accelerated beyond even optimistic projections.
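As a back-of-the-envelope check on that pace, the two figures quoted above can be turned into an implied doubling time. This sketch assumes the ten-minute and 55-minute numbers are measured at the same reliability level and sit nine months apart (March to December 2025); neither assumption is confirmed here, and the function name is made up for illustration.

```python
import math

def implied_doubling_time(start_minutes, end_minutes, months_elapsed):
    """Months per doubling if growth between the two points were exponential."""
    doublings = math.log2(end_minutes / start_minutes)
    return months_elapsed / doublings

# Figures as quoted in the text; the nine-month gap is an assumption.
months_per_doubling = implied_doubling_time(10, 55, 9)
print(f"Implied doubling time: {months_per_doubling:.1f} months")
```

Under those assumptions the task length would be doubling roughly every three and a half to four months.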

For most industries, this is interesting. For healthcare, it's existential.

The Attention Span Problem

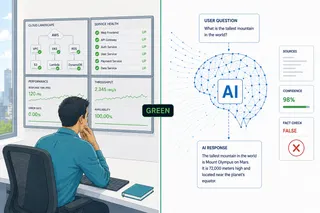

Here's the uncomfortable truth about most AI deployments in healthcare today: they succeed because they're solving problems someone with the attention span of a four-year-old could handle.

Draft a patient summary? Seconds of sustained attention. Flag a suspicious image? A momentary focus. Suggest a diagnosis from symptoms? A single burst of reasoning.

These are valuable tasks. They save time. They reduce errors. But they don't require what medicine actually demands: the ability to maintain focus, context, and responsibility across hours or days of evolving uncertainty.

Most AI pilots can thrive with the attention span of a toddler because most pilots are designed around tasks that fit a toddler's attention span.

The hard problems in healthcare require something fundamentally different. They require the attention span of the clinicians we're trying to support.

Why Attention Span Matters Now

Consider three scenarios where the attention span gap becomes visible.

AI Scribes That Suggest Orders: The 15-Minute Test

Epic's newer AI charting features, and similar tools from other vendors, are moving beyond transcription toward suggesting documentation elements and potential next steps, including draft orders in some configurations.

A simple scribe can transcribe words chronologically: the patient said this, the doctor said that. That's recording, not reasoning.

But suggesting orders requires something different. The system must track the complete patient interaction, understand which pieces connect to which clinical decisions, recognize when the doctor is thinking out loud versus making a commitment, distinguish what was ruled out from what was confirmed, and place information where it needs to be, not just summarize chronologically.

A patient might mention chest pain at minute three, describe it as sharp at minute seven, clarify it happens with deep breaths at minute ten, and the doctor might circle back to rule out cardiac causes at minute thirteen. To suggest the right orders, the AI needs sustained attention through all fifteen minutes, connecting scattered pieces and understanding the evolving clinical picture.

That's sustained clinical attention at the simplest level. Just fifteen minutes. But it already eliminates most AI systems deployed today, which lose the thread after a few exchanges.

Autonomous Surgery: The Attention Span of a Neurosurgeon

When people hear "autonomous surgery," they imagine a single impressive action: a precise cut, a perfect suture. Those are short-horizon tasks. Current autonomous surgical systems demonstrate task-specific autonomy, not full procedural autonomy.

But surgery is not a maneuver. It's a process unfolding over hours.

A neurosurgeon maintains a coherent mental model of what they're doing, why, what could go wrong, and how the current moment connects to everything before and after. That cognitive thread stays unbroken for four, six, sometimes twelve hours.

A truly autonomous surgical system would need the same attention span. It would need to interpret pre-operative imaging, adapt the plan once the body is opened, respond to unexpected anatomy, manage bleeding and tissue variability, coordinate multiple sub-procedures in sequence, pause and re-plan when uncertainty increases, hand control back to humans when thresholds are crossed, and document decisions.

The most dangerous moments aren't when the system is wrong. They're when it's confidently wrong and keeps going because it's lost the thread. What kills patients isn't a single bad prediction. It's slow bleeding that goes unnoticed because attention drifted, small deviations that accumulate because context was lost, delayed recognition because the system forgot what normal looked like three hours ago.

These are attention span failures.
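A toy comparison makes the "forgot what normal looked like" failure concrete. The sketch below checks each new reading either against a remembered baseline or only against the previous reading. The readings, the threshold, and the function name are hypothetical and chosen for illustration; they are not clinical values or a real monitoring algorithm.

```python
def first_alert(readings, threshold, remember_baseline):
    """Return the index of the first reading that triggers an alert, or None.

    With remember_baseline=True each reading is compared to the first one.
    With remember_baseline=False it is compared only to the previous reading,
    which mimics a system that has lost its longer-term context.
    """
    baseline = readings[0]
    for i in range(1, len(readings)):
        reference = baseline if remember_baseline else readings[i - 1]
        if abs(readings[i] - reference) >= threshold:
            return i
    return None

# Hypothetical measurement that drifts down by 3 units at every check.
readings = [120 - 3 * i for i in range(13)]

with_memory = first_alert(readings, threshold=20, remember_baseline=True)
without_memory = first_alert(readings, threshold=20, remember_baseline=False)
print(with_memory, without_memory)
```

With the baseline retained, the alert fires at the seventh check after the start, once the cumulative drop reaches 21 units. Without it, every step looks like a harmless 3-unit change and no alert ever fires, even though the total drift by the end is 36 units.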

Longitudinal Inpatient Care: The Attention Span of an Internist

Consider a patient admitted with heart failure, chronic kidney disease, infection, diabetes, and polypharmacy. This is standard for medicine. It's also where the limits of short-attention-span AI become painfully obvious.

An experienced internist doesn't just see today's labs. They remember yesterday's trajectory, last week's response to treatment, the patient's baseline from six months ago. They maintain a running mental model that updates continuously over days, sometimes weeks.

That's sustained clinical attention.

No single decision determines the outcome. The outcome emerges from dozens of small, interacting choices over days: diuretics adjusted, renal function drifting, blood pressure responding non-linearly, labs lagging behind physiology, symptoms improving before biomarkers, side effects appearing quietly.

This requires the attention span to track multiple threads simultaneously, maintain context across shift changes, remember which assumptions are still valid and which have been proven wrong, and resist starting fresh each morning as if the previous three days didn't happen.

Most current clinical AI operates with toddler-level attention spans: predict sepsis risk at six hours, flag readmission risk at discharge, recommend a dose adjustment now. Each may be locally accurate. None maintains responsibility for the trajectory.

Clinicians rarely act on a single data point. They wait for quorum: labs, vitals, imaging, symptoms, response to treatment, trajectory. Diagnosis is not a moment. It's a convergence that happens over time.

A long-attention-span AI wouldn't aim to replace judgment. It would support quorum formation over time: tracking which hypotheses are still alive, noticing which explanations are weakening, recognizing when data is contradictory rather than additive, and surfacing when enough evidence exists to act and, critically, when it does not.

In medicine, restraint is often the safest intervention. But restraint requires the attention span to remember why you're waiting.
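The quorum idea above can be sketched as a small evidence tracker that keeps every finding attached to the hypothesis it bears on, along with the hour it arrived. The class names, the status labels, the quorum of three findings, and the example findings are all assumptions made up for illustration. This is not a clinical decision rule.

```python
from dataclasses import dataclass, field

@dataclass
class Hypothesis:
    name: str
    supporting: list = field(default_factory=list)     # (hour, finding) pairs
    contradicting: list = field(default_factory=list)  # (hour, finding) pairs

    def status(self, quorum):
        if len(self.contradicting) > len(self.supporting):
            return "weakening"        # evidence against now outweighs evidence for
        if self.supporting and self.contradicting:
            return "contradictory"    # data conflicts rather than adds up
        if len(self.supporting) >= quorum:
            return "act"              # enough concordant evidence has accumulated
        return "wait"                 # still alive, but not enough to act on


class QuorumTracker:
    def __init__(self, quorum=3):
        self.quorum = quorum          # hypothetical threshold, not a clinical standard
        self.hypotheses = {}

    def record(self, hour, name, finding, supports=True):
        h = self.hypotheses.setdefault(name, Hypothesis(name))
        (h.supporting if supports else h.contradicting).append((hour, finding))

    def summary(self):
        return {name: h.status(self.quorum) for name, h in self.hypotheses.items()}


# Illustrative findings spread over roughly a day and a half of an admission.
tracker = QuorumTracker(quorum=3)
tracker.record(2, "infection", "fever")
tracker.record(8, "infection", "rising white cell count")
tracker.record(20, "infection", "positive culture")
tracker.record(4, "fluid overload", "leg swelling")
tracker.record(30, "fluid overload", "weight falling without diuretics", supports=False)
tracker.record(6, "drug side effect", "new rash")

summary = tracker.summary()
print(summary)
```

The point of the design is that "wait" is an explicit, remembered state rather than an absence of output: the tracker still holds the rash recorded at hour six when the next finding arrives, so it knows why it has not yet acted.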

The Curve Is Steeper Than Expected

METR's research measures how many steps an AI system can work through before its reasoning breaks down.

Early language models had the attention span of a distracted toddler: perhaps a dozen connected actions before losing coherence. The latest systems? On the order of hundreds of steps, corresponding to tasks that take humans from tens of minutes to hours, depending on domain.

The improvement curve is steeper than expected.

This matters because healthcare operates on a fundamentally different timescale than most AI applications. Medical care unfolds across hours, days, sometimes weeks, demanding sustained attention that never wavers.

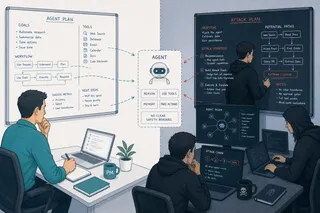

Short-attention-span AI can identify anatomical landmarks, classify tissue types, predict outcomes given fixed assumptions. Long-attention-span AI must maintain coherent goals across many steps, recover from errors instead of compounding them, preserve context across interruptions, reason about when not to act, and recognize when it no longer understands the situation.

The future of clinical AI isn't about systems that always know what to do. It's about systems that maintain enough attention to know when they don't know, and can hold that self-awareness steady over time.

Why Healthcare Needs Grown-Up Attention Spans

Most industries can tolerate AI systems that work in bursts. An email gets drafted. A report gets generated. If it's wrong, someone catches it. The stakes are manageable.

Healthcare doesn't have that luxury.

Medical decisions cascade. Small errors compound. Delayed recognition kills. You cannot hand off responsibility every few minutes and expect safe care.

And critically, you cannot simulate the complexity of sustained clinical attention convincingly enough to prove safety. You can simulate lab values and outcomes. You cannot simulate what it feels like to maintain clinical responsibility for hours, to track dozens of threads simultaneously, to remember the subtle detail from three hours ago that suddenly becomes critical.

Simulation strengthens development and testing, but it cannot substitute for proving that a system can sustain safe behavior over time in the real world.

The Gap Is Closing

Here's what makes the METR findings significant: the attention span gap between current AI and clinical utility is narrowing faster than regulatory, ethical, and validation frameworks can adapt.

We're approaching a threshold where AI systems could theoretically maintain attention across a fifteen-minute encounter, surgeon-level focus across a full procedure, or internist-level attention across a multi-day hospital stay. Not perfectly. Not autonomously. But with enough sustained attention to assist, monitor, suggest, and escalate appropriately.

This is fundamentally different from the prediction-focused, attention-deficit AI tools we have today.

Healthcare has always understood intuitively that sustained attention matters. That's why we round. That's why we reassess. That's why diagnoses evolve. Medicine isn't a series of isolated moments. It's a continuous process of observation, hypothesis refinement, and course correction that demands unbroken attention.

AI is finally beginning to match that attention span.

What Comes Next

We're watching two curves converge: AI's ability to sustain attention over time is accelerating, while healthcare's tolerance for AI with grown-up attention spans is cautiously increasing.

The systems we'll see in the next few years won't replace clinicians. They'll work alongside them with the attention span to maintain context across shifts, track subtle changes that humans miss in the noise, surface patterns that only become visible when you've been paying attention for days, and escalate appropriately because they haven't forgotten what happened six hours ago.

The future of clinical AI isn't about better answers. It's about systems with the attention span to wait, watch, remember, and know when enough is enough.

That's not artificial intelligence in the flashy sense. It's artificial patience backed by sustained attention. And in medicine, that combination isn't just a virtue. It's the foundation of safety.

The technology is getting there faster than we thought. AI systems are growing up, developing the attention spans that clinical work demands.

The question is whether we're building the frameworks, the validation methods, and the trust structures to deploy them responsibly.

Because in healthcare, having the attention span of a gifted four-year-old isn't good enough, no matter how gifted. We need AI with the attention span of the clinicians we trust with our lives.

And for the first time, we're close enough that "not yet" is starting to sound different from "not ever."

For more on METR's research on long-horizon task completion, see: https://metr.org/blog/2025-03-19-measuring-ai-ability-to-complete-long-tasks/