Why Healthcare AI Must Be Multimodal: We Don't Diagnose with Single Data Points

A 57-year-old liver transplant patient arrives confused and lethargic, with asterixis and ascites. No experienced clinician makes a diagnosis from one signal: a single heart rate, a single lab, or a single image. But much of healthcare AI is still built that way. The clinical reality is multimodal.

A 57-year-old man arrives at the emergency department confused and lethargic. His family mentions he's had a liver transplant. He's drowsy and mildly tachycardic, with asterixis and large-volume ascites.

No experienced clinician looks at just one piece of this puzzle. We don't stop at the heart rate. We don't diagnose from the ascites alone. We certainly don't make treatment decisions based solely on a single lab value.

Instead, we integrate: the patient's appearance and level of consciousness, the conversation with family, the physical exam findings, the medical history of transplant and cirrhosis, the lab results showing anemia and elevated inflammatory markers, the imaging confirming ascites, and finally the paracentesis revealing turbid fluid with elevated white cells.

Each piece refines our understanding. The C-reactive protein elevation suggests infection, but it's the combination with mental status changes, asterixis, and ascitic fluid analysis that leads us to spontaneous bacterial peritonitis with hepatic encephalopathy. Remove any single modality, and the picture becomes less clear, the diagnosis less certain, the treatment less targeted.

This is how medicine works. This is how it has always worked.

The Multimodal Reality of Clinical Practice

Every clinical decision is inherently multimodal. We synthesize information from:

- Visual assessment: How does the patient look? Are they comfortable or distressed? What's their color, their breathing pattern, their level of engagement?
- Conversational data: What are the symptoms? How long have they persisted? What makes them better or worse? What's the patient's understanding and concern?
- Historical context: Previous diagnoses, medications, procedures, family history, social determinants, baseline function
- Physical examination: Vital signs, auscultation, palpation, specific maneuvers that reveal pathology
- Laboratory data: Biochemical markers, cell counts, cultures, genetic information
- Imaging: Structural and functional visualization across multiple modalities
- Specialist input: Consultation notes, pathology reports, radiology interpretations
- Time-series patterns: How have these values changed? Is the trajectory improving or worsening?

We don't think of this as multimodal decision-making. We just call it medicine.

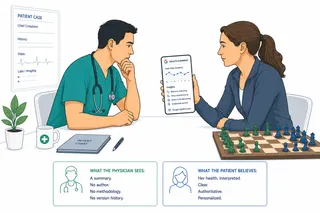

The Single-Modality AI Paradox

Yet much of healthcare AI has been built around single modalities. An algorithm that reads chest x-rays. A model that predicts sepsis from lab values. A system that analyzes ECG waveforms.

These tools can be impressive within their narrow domains. But they fundamentally misunderstand how clinical decisions actually happen.

When we deploy a model that only sees laboratory results, we're asking it to make predictions without the context that makes those results meaningful. A potassium level of 6.0 mmol/L means something very different in a patient on dialysis versus a patient with normal renal function taking an ACE inhibitor. The lab value alone doesn't tell you which scenario you're facing.

When we build an image classification model that only sees radiographs, we're stripping away the clinical context that determines whether a finding matters. A small pulmonary nodule has different implications for a 30-year-old nonsmoker versus a 70-year-old with a smoking history. The image alone doesn't provide that context.

Why This Matters Now

A recent comprehensive review in The Lancet Digital Health examined the promises and challenges of multimodal AI in healthcare.[1] The authors, drawing from a symposium at the University of Toronto's Temerty Centre for AI Research and Education in Medicine, make a compelling case: if AI is to truly support clinical decision-making, it must work the way clinicians work, integrating diverse data types to understand the complete clinical picture.

This isn't just about making AI more sophisticated. It's about making AI clinically relevant.

The paper describes multimodal AI as systems capable of processing and integrating diverse data types: text, images, tables, time series, video, and audio. In other words, the same range of information that clinicians naturally integrate.

The Clinical Advantages Are Clear

Multimodal AI can:

Compensate for missing data: When one diagnostic modality isn't available, for example when imaging is withheld to avoid radiation exposure in a pediatric patient, the system can leverage other data sources to maintain diagnostic accuracy.

Identify patterns across modalities: The relationship between adventitious breath sounds, patient history, and physical examination findings provides more diagnostic information than any single element alone.

Adapt to clinical workflow: Healthcare data doesnt arrive all at once. Vital signs come first, laboratory results hours later, imaging the next day. A multimodal system can provide incremental predictions as each new data type becomes available, matching the reality of how clinical information accumulates.

Reduce cognitive blind spots: Humans have cognitive biases and limitations in processing multiple simultaneous data streams. AI systems can continuously monitor and integrate diverse data points that clinicians cannot cognitively process in real time.

Enable true personalization: Combining demographics, social determinants, immune status, genomics, imaging, and pharmacogenetics allows for genuinely individualized risk prediction and treatment optimization. This is precision medicine as it was originally envisioned.
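To make the "adapt to clinical workflow" point concrete, here is a minimal sketch of incremental prediction. It assumes each modality already has its own model that outputs a log-odds score, and it fuses whatever has arrived so far by averaging those scores. The class name, the averaging rule, and the numbers are illustrative assumptions, not something taken from the Lancet review.

```python
import math


def sigmoid(x):
    """Map a log-odds score to a probability."""
    return 1.0 / (1.0 + math.exp(-x))


class IncrementalRisk:
    """Keeps one log-odds score per modality and fuses whatever has arrived."""

    def __init__(self):
        self.logits = {}

    def update(self, modality, logit):
        """Record (or overwrite) a modality's score and return the fused probability."""
        self.logits[modality] = logit
        return self.predict()

    def predict(self):
        if not self.logits:
            raise ValueError("no modality has arrived yet")
        mean_logit = sum(self.logits.values()) / len(self.logits)
        return sigmoid(mean_logit)


# Hypothetical per-modality scores, in the order the data might arrive.
risk = IncrementalRisk()
timeline = [("vitals", 0.2), ("labs", 1.1), ("imaging", 1.7)]
history = [(name, risk.update(name, logit)) for name, logit in timeline]

for name, probability in history:
    print(f"after {name}: fused probability = {probability:.2f}")
```

The estimate is usable as soon as the first modality is in and is revised, not restarted, as each later result lands. A real system would also need to handle stale values and calibration, which this sketch ignores.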

But the Challenges Are Substantial

The Lancet review doesn't shy away from the obstacles, and they're significant:

Data integration complexity: Laboratory results, radiology images, clinical notes, and waveform data have fundamentally different structures, sampling frequencies, and quality standards. Each modality requires different encoding methods, and fusing them while preserving complementary information is technically complex. The paper describes three main fusion approaches: early fusion (combining after encoding), joint fusion (iterative encoding with feedback), and late fusion (separate models then integration), each with distinct trade-offs.
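As a rough illustration of where the combining happens, the sketch below contrasts early and late fusion as described above. The encoded vectors and weights are made-up stand-ins for trained encoders and models, and the linear scorers are an assumption chosen for brevity. Joint fusion is left out because it depends on training the encoders together with feedback from the downstream model, which does not fit in a few lines.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def dot(weights, features):
    return sum(w * f for w, f in zip(weights, features))


# Hypothetical, already-encoded feature vectors for one patient.
encoded = {
    "labs": [0.8, -0.3],
    "imaging": [0.5, 0.1, 0.9],
}


def early_fusion(encoded, weights):
    """Concatenate the encoded vectors, then score them with ONE model."""
    # Sorting the modality names fixes the concatenation order.
    fused = [x for name in sorted(encoded) for x in encoded[name]]
    return sigmoid(dot(weights, fused))


def late_fusion(encoded, weights_per_modality):
    """Score each modality with its OWN model, then average the probabilities."""
    probabilities = [
        sigmoid(dot(weights_per_modality[name], vector))
        for name, vector in encoded.items()
    ]
    return sum(probabilities) / len(probabilities)


# Made-up weights standing in for trained parameters.
p_early = early_fusion(encoded, weights=[0.4, 0.2, 0.7, -0.1, 0.3])
p_late = late_fusion(encoded, {"labs": [0.6, 0.2], "imaging": [0.7, -0.1, 0.3]})
print(f"early fusion: {p_early:.3f}, late fusion: {p_late:.3f}")
```

One trade-off is visible even at this scale: the early-fusion model can learn interactions between lab and imaging features but needs every vector present, while the late-fusion average degrades more gracefully when a modality is missing but cannot see cross-modality interactions.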

Temporal and missing data problems: Data arrives at different times based on physician decision-making. Demographics are recorded once, vital signs hourly, labs daily, imaging occasionally. The authors emphasize that missing data is the rule, not the exception, and systems must handle this gracefully. Multimodal models trained on small datasets tend to over-rely on a single modality, requiring techniques to force consideration of all available data.
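One commonly used technique for discouraging over-reliance on a single modality is modality dropout: during training, randomly hide some of the modalities a sample does have, so the model must learn to use the others. The sketch below shows only that augmentation step. The function name, the sample layout, and the values are hypothetical, and this is not necessarily the technique the review's authors have in mind.

```python
import random


def modality_dropout(sample, drop_prob, rng):
    """Randomly hide modalities in a training sample, always keeping at least one.

    `sample` maps modality name -> features, with None marking a modality
    that was never collected for this patient.
    """
    present = [name for name, value in sample.items() if value is not None]
    if not present:
        return dict(sample)
    kept = [name for name in present if rng.random() >= drop_prob]
    if not kept:
        # Never hand the model an entirely empty sample.
        kept = [rng.choice(present)]
    return {name: (value if name in kept else None) for name, value in sample.items()}


rng = random.Random(0)  # seeded only so the sketch is repeatable
# Hypothetical sample: labs were never drawn, so they are already missing.
sample = {"demographics": [57, 1], "vitals": [104, 92], "labs": None, "imaging": [0.7]}
augmented = [modality_dropout(sample, drop_prob=0.5, rng=rng) for _ in range(5)]

for variant in augmented:
    print([name for name, value in variant.items() if value is not None])
```

Training on such variants also doubles as practice for deployment, where missing modalities are the norm.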

Validation and generalization: Traditional metrics don't capture whether models integrate information appropriately, especially when modalities provide discordant information. The risk of model drift increases with each added modality. External validation is particularly challenging across institutions with different populations, equipment, and protocols.

Explainability: The paper distinguishes between explainable AI (requiring post-hoc explanation models) and interpretable AI (inherently understandable). For multimodal systems, showing which elements from which modalities drove predictions and how they interact is essential but technically difficult.

What Multimodal AI Cannot Learn

The paper touches on something fundamental: no matter how many data types it processes, multimodal AI cannot learn the hesitation in a patient's voice, changes in family dynamics, the story that doesn't fit the data, or clinical intuition from thousands of similar cases. Medicine is part science, part art. AI can excel at the science while the art remains elusive.

This doesn't diminish its value. It clarifies its role as a tool that processes structured data to identify patterns humans miss, not a replacement for human judgment about which patterns matter for this particular patient.

The Implementation Reality

The paper identifies critical barriers beyond technical challenges:

Infrastructure: Most healthcare systems struggle with basic interoperability. Real-time multimodal data processing remains a substantial hurdle despite advances in data standards.

Clinical burden: Systems must reduce, not increase, workload. Interfaces need to fit naturally into existing workflows.

Equity concerns: Models trained on well-resourced institutions may perform poorly where certain modalities are collected less frequently or with lower quality. The authors emphasize that some populations, particularly children, may not benefit fully due to scarce pediatric data and smaller market incentives.

Global disparities: Regulatory frameworks, workflows, and care quality vary substantially worldwide. Implementation requires international collaboration that respects local data sovereignty while leveraging diverse expertise.

The Path Forward

Clinical decision-making is multimodal by nature. If AI is to support these decisions, it must be multimodal too. But not just multimodal in the technical sense of processing multiple data types. Multimodal in the clinical sense of integrating information the way experienced clinicians do, with appropriate context, reasonable uncertainty, clear explanation, and respect for what cannot be captured in data.

The Lancet paper makes this clear: the technical challenges are substantial but surmountable. The real question is whether we can build systems that are both technically sophisticated and clinically useful.

This requires collaboration between clinicians who understand how decisions are actually made, engineers who can build robust multimodal systems, and health systems willing to invest in proper implementation.

It also requires humility. The technology changes daily. What works today may not work tomorrow. And the consequences of getting it wrong aren't measured in model performance metrics; they're measured in patient outcomes.

That's why it matters that we get this right. Not just technically right, but clinically right.

Because at the end of the day, that 57-year-old man with confusion and ascites doesn't need an AI system that processes multiple data types. He needs an AI system that helps his clinical team make better decisions by integrating information the way good clinicians naturally do: thoroughly, thoughtfully, and transparently.

That's the promise of multimodal AI in healthcare.

The question is whether we can deliver on it.

References

[1] Azarfar G, Naimimohasses S, Rambhatla S, et al. Responsible adoption of multimodal artificial intelligence in health care: promises and challenges. Lancet Digit Health 2025; 7: 100917. https://www.thelancet.com/journals/landig/article/PIIS2589-7500(25)00099-8/fulltext