Where Is A.I. Taking Healthcare?

The New York Times asked eight leading thinkers where A.I. is headed. Most rated its near-term medical impact as small or moderate. They were looking at the wrong scale. The revolution in healthcare A.I. is already here; it just looks like a discharge summary generated in seconds, not hours.

The Promises, the Pitfalls, and the Patients Caught in Between

When the New York Times recently asked eight leading thinkers where A.I. is headed over the next five years, the healthcare discussion was surprisingly muted. Most panelists rated A.I.'s near-term medical impact as small or moderate. Cognitive scientist Gary Marcus noted that beyond documentation and a handful of narrow deployments, large-scale clinical impact has been limited so far. Nick Frosst, co-founder of A.I. startup Cohere, was more optimistic about reducing doctors' workloads but bluntly skeptical about autonomous drug discovery: "People will probably be disappointed." And philosopher and A.I. critic Yuval Noah Harari offered a darker warning, predicting that the rapid changes of the A.I. revolution are likely to cause a mental health crisis as humans struggle to adapt: "We are about to conduct the biggest psychological experiment in human history, on billions of human guinea pigs, and nobody can predict what the results will be."

But here's what makes healthcare different from every other industry A.I. is touching right now: the stakes aren't quarterly earnings or productivity metrics. They're lives. And when you zoom in from the big-picture futurism to the actual hospital floors, therapy sessions, and rural clinics where A.I. is landing, the picture gets a lot more interesting - and a lot more complicated - than the headlines suggest.

The Stethoscope Is Getting Smarter (But It Still Needs a Doctor)

The numbers are striking. In narrow, task-specific studies, A.I. systems have detected lung nodules with reported accuracy rates around 94%, outperforming human radiologists in those controlled settings - though performance varies widely by dataset and task definition. Johns Hopkins is using A.I. predictive analytics to forecast disease progression, and Mount Sinai's A.I.-powered ICU system is flagging patient risks while reducing false alarms.

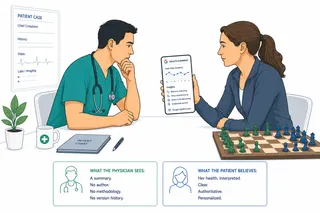

But a recent randomized controlled trial built around clinical vignettes found something paradoxical: an A.I. chatbot working on its own outperformed physicians in diagnostic reasoning tasks, yet when doctors used it as a clinical aid, it didn't consistently improve their performance or save time compared to traditional tools. Brilliant in isolation, less useful in practice.

That's the recurring theme. The technology impresses in controlled settings, but integrating it into how doctors actually think and work remains the hard part. And there's a real risk that better detection could swamp healthcare systems with more follow-up tests than they can handle.

Your Hospital's Biggest A.I. Win Might Be Boring Paperwork

If A.I. is already delivering undeniable value in healthcare, it's in the unglamorous world of clinical documentation and administration.

Kaiser Permanente deployed Abridge's ambient documentation across 40 hospitals and 600+ medical offices, among the largest generative A.I. rollouts in healthcare history. Early pilots report documentation time reductions of 30-50%. Mayo Clinic is investing more than $1 billion across 200+ A.I. projects. These aren't experiments anymore.

The early results are tangible: hospitals report improving administrative task completion by roughly 38%, and one pilot reduced documentation time from two hours to fifteen minutes. When doctors spend less time typing into records, they spend more time looking patients in the eye.

Nick Frosst's prediction from the Times roundtable, that A.I. will become boring in the best way, is already coming true in hospital back offices. The revolution is a nurse who goes home on time because A.I. handled the discharge summary, a prior authorization processed in minutes instead of days. And notably, the majority of healthcare generative A.I. investment is currently flowing to startups rather than incumbents. In healthcare A.I., being purpose-built matters more than being big.

The Therapist in Your Pocket: Hope and Hazard

Perhaps no area of healthcare A.I. sits on a sharper knife's edge than mental health. Globally, one in eight people is affected by a mental health condition, with fewer than five professionals available per 100,000 people. In the U.S., there are roughly 1,600 patients with depression or anxiety for every available provider.

The first clinical trial of a generative A.I. therapy chatbot, Therabot, published in NEJM AI in early 2025, showed clinically meaningful symptom reduction over eight weeks, with a 51% average decrease in depression scores, approaching effect sizes seen in some forms of outpatient therapy. But the sample was modest, the duration short, and only 16% of studies on A.I. therapy chatbots have undergone rigorous clinical efficacy testing. Effect sizes for chatbot interventions remain significantly lower than traditional psychotherapy overall.

Yuval Harari's warning bears repeating here: The danger isn't that these tools exist - it's that economic pressure could normalize the idea that human connection is optional in mental health care.

Personalized Medicine: The Promise That's Still Loading

The vision is seductive: A.I. that analyzes your genetics, medical history, and lifestyle to craft a treatment plan uniquely yours. We're getting closer, but we're not there yet.

Predictive analytics are already helping hospitals anticipate which patients will deteriorate and which treatments will work for specific profiles. But personalized medicine is only as good as its data, and healthcare data is notoriously fragmented and biased. If training datasets don't represent diverse populations, personalized medicine risks becoming "personalized for people who look like the training data" medicine.

As Melanie Mitchell cautioned: A.I. still can't ask the right questions, understand data in different contexts, or judge when a pattern matters. Personalized medicine powered by A.I. will be transformative, but it needs human wisdom as its operating system.

The Equity Question: Will A.I. Close the Gap or Widen It?

This might be the most important question in healthcare A.I., and the one getting the least attention.

The optimistic case is compelling. In selected rural pilots, A.I. tools have reduced time to diagnosis by 20-35% and increased early disease detection in low-resource settings. Mercy integrated A.I. diagnostics across 50+ hospitals in underserved areas, and drone programs are delivering medications across rugged terrain in under 30 minutes. For communities facing a projected shortage of up to 104,900 physicians by 2030, these could be lifelines.

But rural communities often lack the broadband infrastructure cloud-based A.I. requires. Under-resourced clinics can't afford the technology or training. As one health equity officer put it: "It's going to be difficult to advance AI in these communities without some sort of equity allocations."

A.I. doesn't inherently close gaps or widen them - the choices we make about how to deploy it do.

So Where Is A.I. Actually Taking Healthcare?

If you step back from the hype and the panic, a clear-eyed picture emerges.

A.I. is already making hospitals run more efficiently and freeing clinicians from the paperwork that burns them out. That's real, that's happening now, and it matters.

A.I. diagnostics are impressive in narrow tasks but still struggling to integrate seamlessly into clinical practice. The tools are ahead of the workflows.

A.I. therapy tools show genuine promise for expanding access to mental health support, but they're nowhere near replacing human therapists - and we should be cautious about letting economics push us in that direction.

Personalized medicine is the long game, and it'll only work if we solve the data diversity problem first.

And health equity - the question of who benefits from all of this - is the one that will determine whether A.I. in healthcare is remembered as a great equalizer or another chapter in the story of who gets left behind.

Helen Toner, from the Times roundtable, offered perhaps the most useful framework for thinking about all of this: A.I. systems that can clearly contribute at the cutting edge of multiple scientific fields, but that you still wouldn't trust with planning summer camp for your kid. In healthcare terms, that means an A.I. that can spot a tumor on a scan better than a tired radiologist at 2 a.m. - but that you wouldn't trust to sit with a grieving family and explain what comes next.

The technology is moving fast. The harder question - the human question - is whether we'll be wise enough to use it well.

Inspired by "Where Is A.I. Taking Us?", The New York Times Opinion, featuring insights from Melanie Mitchell, Yuval Noah Harari, Carl Benedikt Frey, Gary Marcus, Nick Frosst, Ajeya Cotra, Aravind Srinivas, and Helen Toner.