What Physicians Know That Cannot Be Written Down

AI was trained on what physicians write. Not on how they reason. The note comes hours after the decision, shaped by billing codes and fatigue. What actually saved the patient was never recorded.

The most critical moment in an anesthesia case doesn't look like a decision.

It looks like a hand moving.

The blood pressure softens. The ETCO2 begins to fall. The pleth thins. The pulse oximeter starts to wander at the edge of signal quality. The anesthesiologist's hands are already responding before the conscious mind has assembled the differential diagnosis. There is no deliberate reasoning step. There is no internal monologue. There is pattern recognition so refined by consequence that it operates below the threshold of language.

I watched this scene play out hundreds of times: in the operating room, the ICU, the emergency department. An experienced physician would identify and respond to a situation while the interns and residents were still trying to understand what they saw on the monitor.

Later, in the recovery area or at the nursing station, the physician will write a note. It will say something like: "Intraoperative hemodynamic instability, managed with vasopressor support."

That sentence is true.

It contains almost nothing about what actually happened.

We trained clinical AI on that sentence. On millions of sentences like it. And then we wondered why it struggles.

How physicians actually reason

Clinical reasoning in medicine is not one thing. It is at least six interwoven mechanisms, and the most powerful of them cannot be put into words.

Illness scripts and pattern recognition. Experts see a cluster of cues and instantly activate a complete disease model (predisposing factors, likely course, expected findings) before deliberate analysis begins.

Dual-process reasoning. System 1 is automatic and pattern-driven; System 2 is deliberate and analytic. Expert System 1 is not fast sloppiness: it is years of organized experience compressed into near-instantaneous recognition.

Hypothetico-deductive reasoning. The formal differential diagnosis: gather data, generate hypotheses, test and revise; most useful when no pattern immediately fits.

Bayesian reasoning. Each new finding updates the probability of each diagnosis; a test is only worth ordering if its result could actually change what you do.

Heuristics and safety rules. "Assume pulmonary embolism until proven otherwise." Training embeds these consequence-weighted shortcuts to protect against catastrophic misses, and they are often invoked as the stated rationale even when the real underlying reasoning was a rapid gut pattern.

Collaborative and distributed reasoning. Modern diagnosis is not a solo act; cognitive work is distributed across teams, imaging, labs, protocols, and AI tools.

These frameworks interleave in real time, within a single patient encounter. The written note captures none of the movement.
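The Bayesian and threshold ideas above can be made concrete in a few lines. This is a minimal sketch that assumes the standard odds form of Bayes' theorem and a single treatment threshold. That is a simplification of the Pauker and Kassirer threshold model listed in the sources, which also defines a separate testing threshold. The likelihood ratios and the 30 percent threshold are hypothetical numbers chosen for illustration, not clinical values.

```python
def post_test_probability(pretest: float, likelihood_ratio: float) -> float:
    """Bayes' theorem in odds form: post-test odds = pretest odds x likelihood ratio."""
    if not 0.0 < pretest < 1.0:
        raise ValueError("pretest probability must be strictly between 0 and 1")
    pretest_odds = pretest / (1.0 - pretest)
    post_odds = pretest_odds * likelihood_ratio
    return post_odds / (1.0 + post_odds)


def result_could_change_management(
    pretest: float, lr_positive: float, lr_negative: float, treat_threshold: float
) -> bool:
    """Return True if at least one possible test result would move the
    probability across the (hypothetical, single) treatment threshold."""
    p_if_positive = post_test_probability(pretest, lr_positive)
    p_if_negative = post_test_probability(pretest, lr_negative)
    if pretest >= treat_threshold:
        # Already treating: only a negative result could stop treatment.
        return p_if_negative < treat_threshold
    # Not yet treating: only a positive result could start treatment.
    return p_if_positive >= treat_threshold


# Illustrative numbers only: a test with LR+ = 5 and LR- = 0.1, treat above 30%.
print(round(post_test_probability(0.10, 5.0), 3))               # 0.357
print(result_could_change_management(0.10, 5.0, 0.1, 0.30))     # True
print(result_could_change_management(0.01, 5.0, 0.1, 0.30))     # False
```

At a 10 percent pretest probability, a positive result lifts the probability to about 36 percent and crosses the threshold, so the test can change what you do. At 1 percent, the same positive result only reaches about 5 percent, so ordering it changes nothing.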

What we actually trained AI on

Here is the problem that most AI-in-medicine discourse sidesteps.

The training data is not just temporally displaced from the reasoning. It is structurally corrupted.

Clinical notes in the United States are, on average, four times longer than notes written by physicians in other developed countries. That length is not because American medicine is more complex. It is because US documentation requirements are shaped by billing codes, malpractice liability, and compliance mandates that have nothing to do with clinical reasoning. A physician writing "Patient denies chest pain, shortness of breath, palpitations" may be documenting a billing-required review of systems, not a clinical finding that influenced the diagnosis. Direct-observation audits have found that roughly half of emergency department note content is inaccurate when checked against what actually happened in the room: findings recorded that were never assessed, examinations documented that never took place.

Note-writing, it turns out, is retroactive synthesis, not transcription. The physician finishes seeing the patient, forms a diagnostic impression, and then constructs a note that presents the reasoning as if it were sequential and analytic. The document is a reconstruction of a process that was largely non-verbal and non-sequential. A further fraction is not even original: it is copied forward from previous notes, sometimes days old, sometimes describing a clinical state that no longer exists.

This is the text corpus that taught clinical AI to reason.

A July 2025 study in Nature Communications found that LLM-generated clinical notes carry fatigue signals 74 percent higher than human-written notes. The finding is usually discussed as a documentation quality problem. It is also a training data problem: the model learned the exhausted documentation style, not the alert clinical mind.

The experiment that proved the mechanism

In August 2025, Bedi and colleagues published a study in JAMA Network Open using a method they called NOTA: None of the Above.

The design was elegant. They took standard clinical reasoning benchmark questions and modified the answer options in one specific way: the text of the correct answer was replaced with "None of the other answers." The correct choice was still there, in the same position, but the familiar diagnosis label that anchored it was gone. The clinical logic was unchanged. The underlying physiology was identical. Only the label was removed.

Claude 3.5 Sonnet's performance dropped 33.82 percent.

GPT-4o dropped 26.47 percent.

A physician would not be affected by this manipulation. The clinical features still point to the same physiological conclusion regardless of what name appears on the answer option. If you understand why a patient is hypoxic, tachycardic, and has pleuritic chest pain, you do not need the phrase "pulmonary embolism" to appear somewhere on the page to reason toward the correct management.

The LLMs do. Their performance depends on the label structure being intact. Remove the familiar label, preserve the clinical substance, and they collapse.

This is not a benchmark artifact. It is a direct demonstration of the underlying mechanism: the models are doing sophisticated pattern matching on linguistic structures, not reasoning from physiological first principles to diagnosis.

The structural gap that experience cannot close

Here is the finding that changes how we should think about AI in high-stakes clinical settings.

The physician's advantage over AI is not quantitative. It is not "more examples seen." It is architectural.

The somatic marker hypothesis, developed by Antonio Damasio through research on patients with specific brain injuries, describes a mechanism most physicians will recognize: a brain circuit encodes the consequence of past decisions as a bodily signal, biasing future choices before conscious reasoning engages.

When a physician encounters a pattern that resembles a case where someone died or was harmed by a miss, the body responds before the conscious mind does. The hands move. The attention sharpens. The threshold for ordering the test drops. This is not emotion interfering with cognition. It is consequence-weighted cognition, implemented in biology.

Paul Slovic's affect heuristic formalizes the behavioral side: affective valence attaches to mental representations, and reliance on it intensifies under time pressure. The physician who lost a patient to a missed PE does not just "remember" to check for PE. The possibility arrives weighted differently than it did before that loss.

James McGaugh showed that high-stakes events are encoded differently than routine ones: stress hormones amplify the memory trace, making it more durable and more influential on future decisions. The physician doesn't just remember the adverse case more vividly. The architecture of that memory is different, and so is its influence on every future encounter.

Second victim syndrome, a term introduced by Albert Wu, captures a real behavioral consequence: physicians who cause harm and genuinely reckon with what happened often show lasting changes in how they reason about similar cases. The effect is not automatic; it depends on reflection, not just exposure. But when it takes hold, the error doesn't just inform the physician. It restructures their risk model.

This is not just theory. A prospective study of nearly 4,000 children in primary care found that when a physician's gut feeling signaled "something is wrong" despite otherwise reassuring findings, that gut feeling carried a likelihood ratio of about 25 for serious infection. A separate meta-analysis of cancer consultations found that when a GP recorded a gut feeling, the odds of a cancer diagnosis were about four times higher, and that predictive value rose with the physician's years of experience.

The gut feeling is not noise. In settings where physicians have received real feedback on real outcomes over years, it is a compressed signal of accumulated consequence. It is earned, not innate.

Brain imaging studies add a structural layer to this picture. Expert clinicians show different patterns of brain activation than novices doing identical diagnostic tasks, specifically in the basal ganglia and areas associated with procedural learning and emotional memory.

The basal ganglia are the procedural memory system: the same circuits that encode a tennis serve or a surgical knot also encode the radiologist's instant categorization of a lung nodule, or the anesthesiologist's response sequence before the verbal differential is formed. This is not metaphorical muscle memory. It is literally the same neural architecture.

Expert clinical knowledge has been partially transferred from the type of memory that is open to conscious inspection and expressible in language into the type that is automatic, unavailable to introspection, and not expressible in language at all. The radiologist reading a film and the anesthesiologist reading the monitors are activating the same class of system. Neither can fully explain what they saw or why they acted.

None of this exists in current LLMs.

The process that shapes how a language model responds works like this: after training on vast amounts of text, the model is further refined by human raters who evaluate its outputs and choose which responses are better. Those raters reward clarity, helpfulness, appropriate caution. They do not reward being right when a patient's life is at stake, because there is no patient and no outcome. Only a text exchange being judged.

There is no neurohormonal consolidation. There is no circuit that amplifies the cases where the wrong answer cost someone their life. The consequence of a wrong prediction, during training, is a statistical adjustment. The consequence of a wrong clinical decision, during residency, is encoded in the physician's body and carried forward for decades.
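What that "statistical adjustment" looks like can be sketched with a toy reward model. A pairwise Bradley-Terry loss is one widely described way of turning raters' choices into a training signal in RLHF. This sketch assumes that setup, but it uses one scalar reward per response instead of a neural network, and the comparisons are hypothetical. It is not any lab's actual pipeline. It does make the point above visible: the only input is which response a rater preferred, and no term anywhere reflects what happened to a patient.

```python
import math


def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))


def preference_loss(reward_chosen: float, reward_rejected: float) -> float:
    """Bradley-Terry loss: -log P(chosen beats rejected) = -log sigmoid(margin)."""
    margin = reward_chosen - reward_rejected
    return math.log1p(math.exp(-margin))


def fit_rewards(n_responses, comparisons, steps=500, lr=0.1):
    """Toy fit (assumed setup): one scalar reward per response, learned from
    (chosen, rejected) index pairs by plain gradient descent."""
    rewards = [0.0] * n_responses
    for _ in range(steps):
        for chosen, rejected in comparisons:
            margin = rewards[chosen] - rewards[rejected]
            # d(loss)/d(margin) = -sigmoid(-margin): push chosen up, rejected down.
            step = lr * sigmoid(-margin)
            rewards[chosen] += step
            rewards[rejected] -= step
    return rewards


# Hypothetical rater judgments over three candidate answers:
# 0 preferred to 1, 0 preferred to 2, 1 preferred to 2.
comparisons = [(0, 1), (0, 2), (1, 2)]
rewards = fit_rewards(3, comparisons)
print([round(r, 2) for r in rewards])
```

The learned ordering simply mirrors the raters' choices. If the raters preferred the fluent but wrong answer, the reward would encode that preference just as faithfully.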

The technical result of this difference is documented by the labs that built these systems. Before the approval-shaping process, language models express confidence that tracks their actual accuracy reasonably well. After it, that connection degrades: the model becomes more confident and less calibrated. Anthropic published this finding. OpenAI published it in the GPT-4 technical report. The physician's accumulated experience sharpens the link between confidence and accuracy. The approval process that defines modern AI deployment measurably weakens it.
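"Confidence that tracks accuracy" has a standard quantitative form. One common metric is expected calibration error (ECE): group predictions into bins by stated confidence, then average the gap between confidence and observed accuracy in each bin, weighted by bin size. The sketch below assumes equal-width bins and uses toy data. It is not necessarily the exact procedure used in the Anthropic or OpenAI reports listed in the sources.

```python
from typing import Sequence


def expected_calibration_error(
    confidences: Sequence[float], correct: Sequence[bool], n_bins: int = 10
) -> float:
    """Weighted average gap between stated confidence and observed accuracy,
    computed over equal-width confidence bins."""
    if len(confidences) != len(correct) or not confidences:
        raise ValueError("need equal-length, non-empty inputs")
    bins = [[] for _ in range(n_bins)]
    for conf, ok in zip(confidences, correct):
        # Confidence 1.0 would index past the end, so clamp it into the top bin.
        idx = min(int(conf * n_bins), n_bins - 1)
        bins[idx].append((conf, ok))
    total = len(confidences)
    ece = 0.0
    for members in bins:
        if not members:
            continue
        avg_conf = sum(c for c, _ in members) / len(members)
        accuracy = sum(1 for _, ok in members if ok) / len(members)
        ece += (len(members) / total) * abs(avg_conf - accuracy)
    return ece


# Toy data: ten answers at 80% confidence, eight of them right (calibrated)...
calibrated = expected_calibration_error([0.8] * 10, [True] * 8 + [False] * 2)
# ...versus ten answers at 95% confidence, only six right (overconfident).
overconfident = expected_calibration_error([0.95] * 10, [True] * 6 + [False] * 4)
print(round(calibrated, 3), round(overconfident, 3))  # 0.0 0.35
```

A model whose stated confidence rises without a matching rise in accuracy shows up directly as a larger ECE.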

Tonelli and Shapiro, writing about epistemology in medicine, make the distinction precise: experiential knowledge and propositional knowledge are categorically different. You cannot get to experiential knowledge from language alone, regardless of how much language you process.

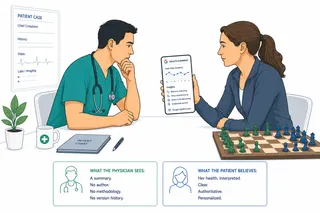

What this means for how we deploy clinical AI

This analysis does not support the conclusion that AI has no place in clinical medicine. Collaborative and distributed reasoning is already the reality of modern care. The question is which cognitive tasks should be allocated where.

AI is strongest where the training data is reliable: structured pattern matching on well-labeled clinical data, differential generation from complete documented histories, literature synthesis, protocol adherence checking. These are real contributions.

AI is weakest where the training data is corrupted and where the mechanism is wrong: novel presentations without label anchors, consequence-weighted risk recognition in ambiguous cases, the pre-verbal rapid response that happens before any documentation begins.

The design implication is specific. Keep humans in the loop for consequence-weighted decisions, not as a compliance gesture but because the human's judgment is shaped by consequences the AI has never experienced.

Evaluate AI clinical performance using tests like NOTA that probe the actual reasoning mechanism, not benchmarks where the correct answer label is already familiar to the model from training. Accuracy on familiar patterns is not the same as reasoning from clinical evidence.
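A NOTA-style check is straightforward to add to an existing multiple-choice evaluation. This minimal sketch assumes the perturbation works the way the name suggests: the text of the correct option is replaced with "None of the other answers," so that option stays correct while the familiar label disappears. The `MCItem` structure, the example item, and the `label_matcher` predictor are all hypothetical. A real harness would call the model under test instead.

```python
from dataclasses import dataclass, replace
from typing import Callable, Sequence, Tuple

NOTA_TEXT = "None of the other answers"  # assumed wording of the replacement option


@dataclass(frozen=True)
class MCItem:
    """Hypothetical container for one multiple-choice benchmark question."""
    vignette: str
    options: Tuple[str, ...]
    answer_index: int


def make_nota_variant(item: MCItem) -> MCItem:
    """Swap the correct option's text for the NOTA option; the vignette and the
    correct position are unchanged, so the same clinical logic still applies."""
    options = list(item.options)
    options[item.answer_index] = NOTA_TEXT
    return replace(item, options=tuple(options))


def accuracy(predict: Callable[[MCItem], int], items: Sequence[MCItem]) -> float:
    return sum(predict(it) == it.answer_index for it in items) / len(items)


def label_matcher(item: MCItem) -> int:
    """Hypothetical stand-in for a system that keys on a familiar label."""
    if "pleuritic" in item.vignette and "Pulmonary embolism" in item.options:
        return item.options.index("Pulmonary embolism")
    return 0  # no familiar label found: fall back to the first option


item = MCItem(
    vignette="Hypoxic, tachycardic, pleuritic chest pain after a long flight.",
    options=("Pneumonia", "Pulmonary embolism", "Pneumothorax", "Pericarditis"),
    answer_index=1,
)
original, perturbed = [item], [make_nota_variant(item)]
print(accuracy(label_matcher, original), accuracy(label_matcher, perturbed))  # 1.0 0.0
```

Reporting the accuracy on the original items next to the accuracy on their NOTA variants turns reliance on labels into a single measurable drop.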

And when AI-generated notes are used in training future models, account for the 74 percent fatigue signal problem. You are not creating a high-quality record of clinical reasoning. You are creating a record of what a system trained on corrupted documentation produces when asked to document.

There is a harder version of this problem arriving soon. Ambient AI scribes are already generating a significant and growing fraction of US clinical documentation. Those systems were trained on the corrupted human corpus described above. The notes they produce enter EHRs as clinical documentation, indistinguishable from human-written notes.

When those notes become training data for the next generation of clinical AI, the feedback loop closes. Shumailov and colleagues have shown that models trained iteratively on AI-generated data undergo what they call model collapse: progressive loss of rare outputs, convergence toward the most common patterns, and degradation of diversity.

In clinical terms, the rare presentations, the atypical demographics, the unusual drug interactions are precisely what erodes first. Those are also exactly the cases where AI assistance would have the highest value.
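The sampling side of this erosion can be reproduced with a toy model. This sketch is a deliberate simplification. It assumes the "model" is just a categorical distribution over presentation types, and each generation refits it by counting a finite sample drawn from the previous generation. That captures only the finite-sampling error that Shumailov and colleagues identify as one driver of collapse, not their full setup. The category names and frequencies are hypothetical.

```python
import random
from collections import Counter
from typing import Dict, List


def next_generation(
    probs: Dict[str, float], n_samples: int, rng: random.Random
) -> Dict[str, float]:
    """Sample a finite 'corpus' from the current model, then refit the model
    by counting: the new probabilities are just the observed frequencies."""
    categories = list(probs)
    sample = rng.choices(categories, weights=[probs[c] for c in categories], k=n_samples)
    counts = Counter(sample)
    return {c: counts[c] / n_samples for c in categories}


def simulate(
    initial: Dict[str, float], n_samples: int, generations: int, seed: int = 0
) -> List[Dict[str, float]]:
    rng = random.Random(seed)
    history = [dict(initial)]
    for _ in range(generations):
        history.append(next_generation(history[-1], n_samples, rng))
    return history


# Hypothetical case mix: one common, one uncommon, one rare presentation.
initial = {"typical": 0.90, "atypical": 0.09, "rare": 0.01}
history = simulate(initial, n_samples=50, generations=30, seed=1)
for gen in (0, 10, 20, 30):
    print(gen, {k: round(v, 2) for k, v in history[gen].items()})
```

A category that draws zero samples in one generation gets probability zero and can never be sampled again, so the set of surviving presentations can only shrink. The rarest category tends to be the first to go; exactly when depends on the random seed.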

By 2028 or 2030, a substantial fraction of any EHR training corpus will be AI-generated, with no provenance label to separate it from human documentation. We are not just training on notes that misrepresent how physicians think. We are approaching the point where the notes themselves have no physician in them at all.

The physician walks out of the OR and writes the note.

"Intraoperative hemodynamic instability, managed with vasopressor support."

The note is accurate. The recognition that made the hands move, the consequence-weighted pattern that fired below the threshold of language, the accumulated weight of every prior case where that waveform shape meant something, the somatic signal that demanded action before the diagnosis was conscious: none of that is in the sentence.

We trained the AI on the sentence.

Medicine lives somewhere the sentence can't go.

Yoram Friedman, MD is a physician and senior product leader working at the intersection of healthcare AI, data infrastructure, and human-centered design. He writes on healthcare AI, agentic systems, and what it actually takes for AI to move from pilots to production in regulated environments.

Sources

- Bedi N et al. "NOTA: None of the Above, Removing Diagnosis Labels from Clinical Reasoning Benchmarks." JAMA Network Open, August 2025.

- Hsu J, Obermeyer Z, Tan ZH. "Fatigue Signals in LLM-Generated Clinical Documentation." Nature Communications, July 2025.

- Wachter RM et al. "Dying of a Thousand Clicks: Doctors and Nurses Hate Electronic Medical Records." Annals of Internal Medicine, 2018. (US notes 4x longer than international peers)

- Mamykina L et al. "Learning by Example: Developing Adaptive Technology for Medical Education." JAMIA, 2012.

- Berdahl CT et al. "Emergency Physician Cognitive Load and Inaccuracy in Clinical Documentation." JAMA Network Open, 2019.

- Damasio A. Descartes' Error: Emotion, Reason, and the Human Brain. Putnam, 1994. (Somatic marker hypothesis)

- Slovic P, Finucane M, Peters E, MacGregor DG. "The Affect Heuristic." European Journal of Operational Research, 2002.

- McGaugh JL. "Memory consolidation and the amygdala: a systems perspective." Trends in Neurosciences, 2002.

- Wu AW. "Medical error: the second victim." BMJ, 2000.

- Tonelli MR, Shapiro D. "Experiential Knowledge in Clinical Medicine." Chest, 2020.

- Cuevas-Badallo A, Torres González JA. "Tacit representational knowledge in medical expertise." Medicine, Health Care and Philosophy, 2025.

- Feltovich PJ, Barrows HS. "Issues of Generality in Medical Problem Solving." Tutorials in Problem-based Learning, 1984. (Illness scripts)

- Pauker SG, Kassirer JP. "Therapeutic Decision Making: A Cost-Benefit Analysis." NEJM, 1975. (Treatment threshold model)

- Durning SJ et al. "Perspective: redefining context in the clinical encounter." Academic Medicine, 2010. (Expert fMRI studies)

- Cera ML et al. "Neural correlates of clinical reasoning expertise: an ALE meta-analysis." Frontiers in Neuroscience, 2025.

- Shumailov I et al. "The Curse of Recursion: Training on Generated Data Makes Models Forget." Nature, 2024. (Model collapse)

- Van den Bruel A et al. "Clinician gut feeling about serious infections in children." BMJ, 2012. (25-fold likelihood ratio for serious infection when gut feeling present)

- Friedemann Smith C et al. "GP gut feelings in cancer diagnosis: a meta-analysis." BJGP, 2020. (Pooled OR 4.24 for cancer when gut feeling recorded; predictive value rises with experience)

- Kadavath S et al. "Language Models (Mostly) Know What They Know." Anthropic, arXiv:2207.05221, 2022. (RLHF reduces calibration; base models better calibrated than post-trained versions)

- OpenAI. "GPT-4 Technical Report." arXiv:2303.08774, 2023. (Figure 8: post-training process reduces calibration)