What the Next Generation of Physicians Won't Remember

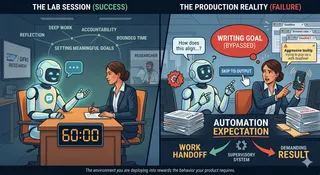

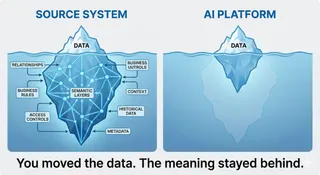

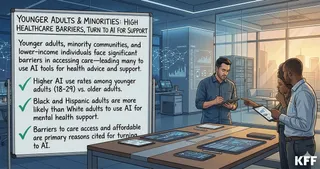

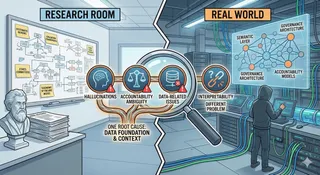

I don't remember much from school. My memory starts the day I entered clinical training. Medicine is learned by burning encounters into memory. AI is very good at removing that weight. The weight was the learning.